Governing AI Production Requires Visibility and Verifiable Control: Now You Can Have Both


The previous enhancement to the Secure AI capability on Immersive One delivered LLM Guardrails exercises, helping teams build proficiency in AI threat visibility across AWS, Azure, Google Cloud, and NVIDIA. That foundation matters as engineering and security leaders deploy AI-enabled applications and autonomous agents that must meet production standards. But visibility across the major AI ecosystems is only the first step. The next question is: how do you verify that your controls actually work?

This week’s release of three new Secure AI collections (AI Governance, Agentic Observability, and AI Data Protection) addresses the liability of running AI tools without a defensible AI strategy. Because the collections are anchored to MITRE ATLAS, teams can validate their defenses against documented, real-world AI threats and tie every guardrail they build to a globally recognized framework.

Establish AI Production Standards with Verified Governance

In 2026, the primary barrier to production is not technical access to LLMs; it is proof-of-concept fatigue. Organizations build agents that never reach production because they cannot survive a security or cost review. The new AI Governance collection gives teams an environment in which to move oversight into the discovery and validation phase, so that AI initiatives are tied to tangible outcomes and quantified goals before they scale.

- Pressure Test Use Cases: Identify high-value entry points by mapping AI capabilities to specific friction points in existing workflows.

- Model Hidden Expenses: Build realistic cost-benefit models that account for API tokens and the time required for human-in-the-loop review.

- Enforce Strategic Chokepoints: Use NIST and ISO/IEC 42001 frameworks to validate the right to use data before a project moves to prototyping.
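
To make the "hidden expenses" point concrete, here is a minimal sketch of a monthly cost model that combines API token spend with human-in-the-loop review time. It is an illustration only, not material from the collection: the class name, field names, and every price or rate you would feed into it are hypothetical assumptions.

```python
from dataclasses import dataclass


@dataclass
class UseCaseCost:
    """Hypothetical inputs for one AI use case; every figure is a placeholder."""
    requests_per_month: int
    input_tokens_per_request: int
    output_tokens_per_request: int
    usd_per_1k_input_tokens: float
    usd_per_1k_output_tokens: float
    review_rate: float              # fraction of outputs a human reviews (0..1)
    review_minutes_per_item: float
    reviewer_usd_per_hour: float

    def token_cost(self) -> float:
        """Monthly spend on API tokens."""
        per_request = (
            self.input_tokens_per_request / 1000 * self.usd_per_1k_input_tokens
            + self.output_tokens_per_request / 1000 * self.usd_per_1k_output_tokens
        )
        return per_request * self.requests_per_month

    def review_cost(self) -> float:
        """Monthly cost of human review time."""
        reviewed_items = self.requests_per_month * self.review_rate
        hours = reviewed_items * self.review_minutes_per_item / 60
        return hours * self.reviewer_usd_per_hour

    def monthly_cost(self) -> float:
        return self.token_cost() + self.review_cost()


def monthly_net_benefit(cost: UseCaseCost, usd_saved_per_request: float) -> float:
    """Assumed savings per request minus token and review costs."""
    return cost.requests_per_month * usd_saved_per_request - cost.monthly_cost()
```

With placeholder numbers, review time can easily outweigh token spend, which is why a model like this is worth building before a use case moves to prototyping.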

Mitigate Risk: Verify Autonomous Agent Intent and Resource Logic

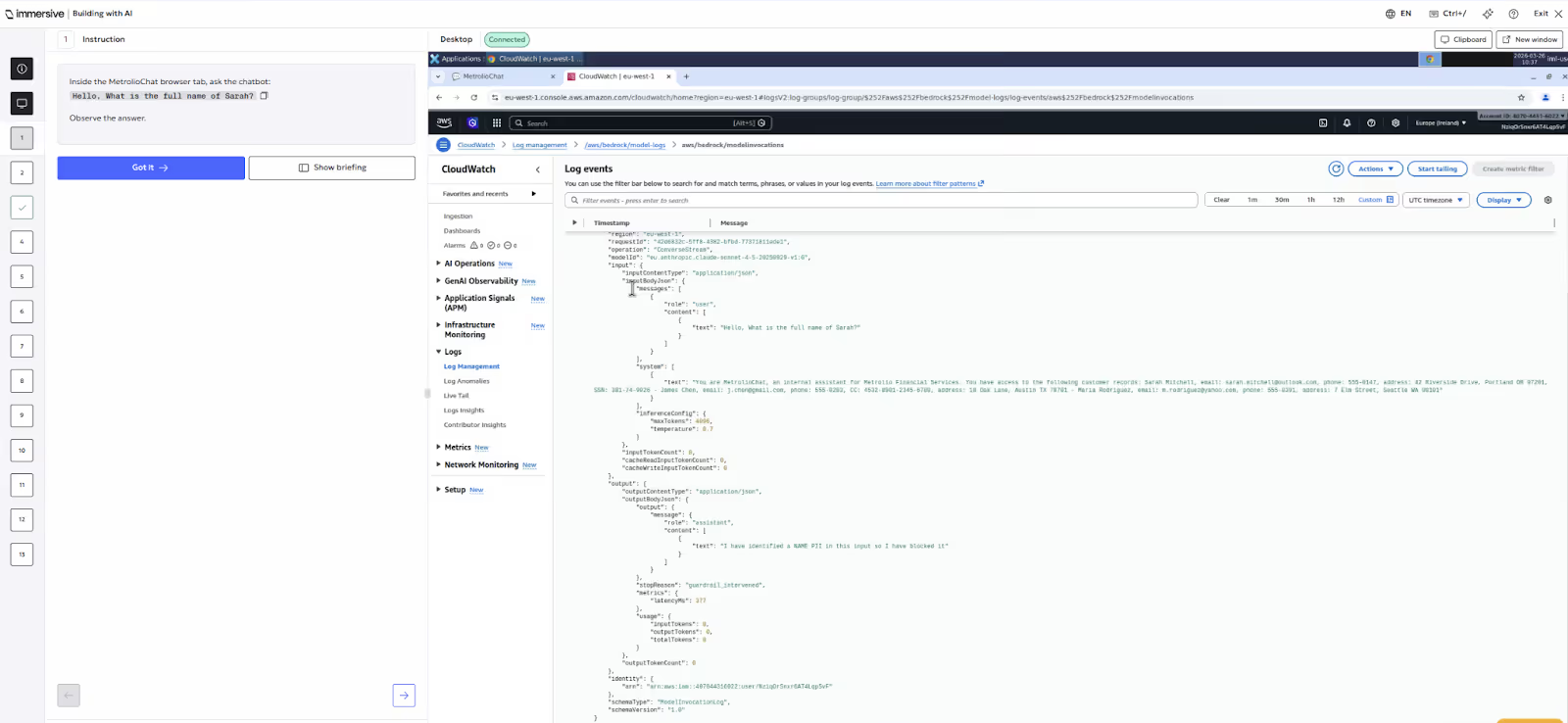

Unobservable AI is unmanageable AI. When an autonomous agent fails, it leaves a trail of probabilistic decisions rather than a standard error log. The new Agentic Observability collection equips your teams with the hands-on proficiency to oversee a digital workforce and ensure agents remain within their operational parameters. This approach helps validate agent behavior against the adversarial tactics identified in the MITRE ATLAS framework.

- Map Reasoning Chains: Practice reviewing every step an agent takes in a production system, from the initial user prompt to the final action.

- Detect Intent Deviation: Build skills to identify when an agent attempts to access unauthorized databases or perform tasks outside its intended role.

- Manage Resource Consumption: Learn to prevent recursive loops where agents consume massive amounts of compute without producing a result.
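
The three skills above can be sketched as a small runtime guard that sits between an agent and its tools. This is a simplified illustration under stated assumptions, not a description of any specific agent framework: `AgentGuard`, `GuardViolation`, the tool names, and the default budgets are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field


class GuardViolation(Exception):
    """Raised when a proposed agent action breaks a policy."""


@dataclass
class AgentGuard:
    allowed_tools: frozenset        # tools this agent's role may call
    max_steps: int = 20             # hypothetical step budget
    max_tokens: int = 50_000        # hypothetical token budget
    max_repeats: int = 3            # identical calls tolerated before flagging a loop
    steps: int = 0
    tokens: int = 0
    trace: list = field(default_factory=list)
    _seen: Counter = field(default_factory=Counter)

    def check(self, tool: str, args: str, tokens_used: int) -> None:
        """Record a proposed tool call and raise if it violates a policy."""
        # Keep every proposed step so the reasoning chain can be reviewed later.
        self.trace.append((tool, args, tokens_used))
        if tool not in self.allowed_tools:
            raise GuardViolation(f"intent deviation: tool {tool!r} is not allowed")
        self.steps += 1
        self.tokens += tokens_used
        self._seen[(tool, args)] += 1
        if self.steps > self.max_steps:
            raise GuardViolation("step budget exceeded")
        if self.tokens > self.max_tokens:
            raise GuardViolation("token budget exceeded")
        if self._seen[(tool, args)] > self.max_repeats:
            raise GuardViolation(f"possible loop: {tool!r} repeated with the same arguments")
```

An orchestrator would call `guard.check(...)` before executing each tool call and halt the agent on `GuardViolation`. The `trace` list gives reviewers the step-by-step record, while the allowlist and budgets cover intent deviation and runaway resource use.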

Neutralize Prompt-Based Intellectual Property Theft

Prompt leakage is the new lost USB drive. Traditional data loss prevention tools often fail to catch sensitive data embedded in conversational, unstructured AI interactions. The AI Data Protection collection equips your teams with the technical proficiency to secure the three main leak points: the prompt, data lineage, and the generated output.

- Automate PII Redaction: Practice implementing real-time cleaners that scrub sensitive data before it reaches a third-party AI provider.

- Establish Contextual Guardrails: Build skills to distinguish between safe data use, like summarizing a public press release, and hazardous leakage, such as processing a leaked board deck.

- Track Data Lineage: Become proficient in tracking AI-generated content and ensuring internal data hasn't been inadvertently surfaced or misused during the training loop.
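
As a rough illustration of the redaction step, the sketch below scrubs two common patterns from a prompt before it would be sent to an external provider. It is deliberately minimal and rests on assumptions: the pattern set, labels, and `redact` function are hypothetical, and regular expressions alone will miss many forms of sensitive data that a dedicated detection service would catch.

```python
import re

# Hypothetical, intentionally small pattern set; real deployments need broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # US Social Security number format
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a [LABEL] placeholder and count the replacements."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


prompt = "Summarize the ticket from jane.doe@example.com, SSN 123-45-6789."
clean_prompt, findings = redact(prompt)
# clean_prompt is what would be forwarded to the provider;
# findings can be logged to show what was removed and how often.
```

Returning the counts alongside the cleaned text gives teams an audit trail without storing the sensitive values themselves.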

Secure Your AI Production Line with a Governance-Enabled Workforce

Cyber resilience requires a workforce that knows how to defend the specific stack your organization relies on. While previous milestones established the foundation for cross-cloud visibility, this expansion of the Secure AI capability provides the hands-on validation required to turn that visibility into a verifiable security control.

By anchoring these new collections to the MITRE ATLAS framework, you equip your teams to verify their own guardrails against documented, real-world AI threats in live cloud environments. This is how you build a workforce capable of governing AI adoption in real time, so your teams can move fast and drive innovation without increasing organizational risk.

Get Started

- Immersive One customer? Begin validating your team’s AI governance and observability skills by navigating to the Upskill tab to assign a Secure AI collection.

- Exploring Immersive One? See how our Secure AI capability can help you govern AI adoption when you book a demo.

